Overview

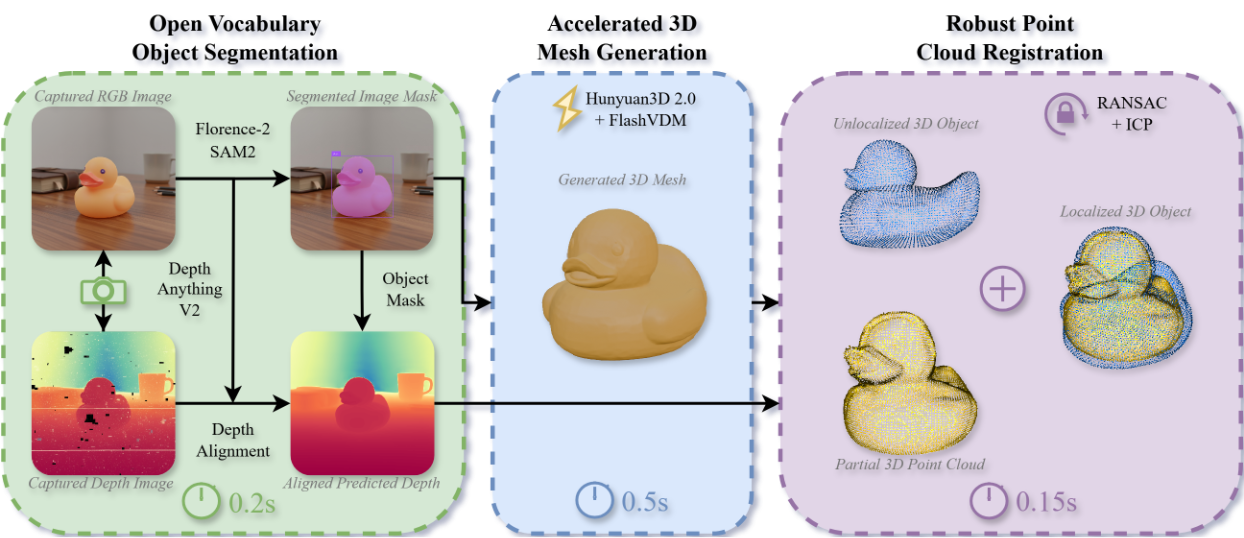

Fig. 1: Our system for sub-second 3D mesh generation from RGB-D input. The system combines three stages: (1) open-vocabulary segmentation using Florence-2 and SAM2, with depth enhancement via Depth Anything v2 (0.2s); (2) accelerated mesh generation using FlashVDM-distilled Hunyuan3D 2.0 (0.5s); and (3) object registration via RANSAC and ICP to align the mesh with the observed point cloud (0.15s). The 0.85s total runtime marks a critical step toward real-time robotic applications.
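The per-stage timings in the caption can be checked with a small latency budget. The sketch below is illustrative only: the stage names, the `STAGES_MS` dictionary, and the helper functions are hypothetical and not part of the system's code; only the three timings come from Fig. 1. Values are kept in integer milliseconds to avoid floating-point rounding when summing.

```python
# Stage latencies in milliseconds, taken from the Fig. 1 caption.
# The dictionary keys are illustrative labels, not identifiers from the system.
STAGES_MS = {
    "segmentation": 200,     # Florence-2 + SAM2 + Depth Anything v2
    "mesh_generation": 500,  # FlashVDM-distilled Hunyuan3D 2.0
    "registration": 150,     # RANSAC + ICP
}


def total_latency_ms(stages):
    """Return the end-to-end latency, assuming the stages run sequentially."""
    return sum(stages.values())


def latency_shares(stages):
    """Return each stage's fraction of the total latency."""
    total = total_latency_ms(stages)
    return {name: ms / total for name, ms in stages.items()}


def slowest_stage(stages):
    """Return the name of the stage with the largest latency."""
    return max(stages, key=stages.get)


if __name__ == "__main__":
    total = total_latency_ms(STAGES_MS)
    print(f"total: {total} ms")  # total: 850 ms
    for name, share in latency_shares(STAGES_MS).items():
        print(f"{name}: {share:.0%} of total")
    print(f"bottleneck: {slowest_stage(STAGES_MS)}")
```

Under the sequential assumption, the total is 850 ms, which matches the 0.85s reported in the caption, and mesh generation accounts for the largest share of the budget.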

Abstract

Qualitative Results

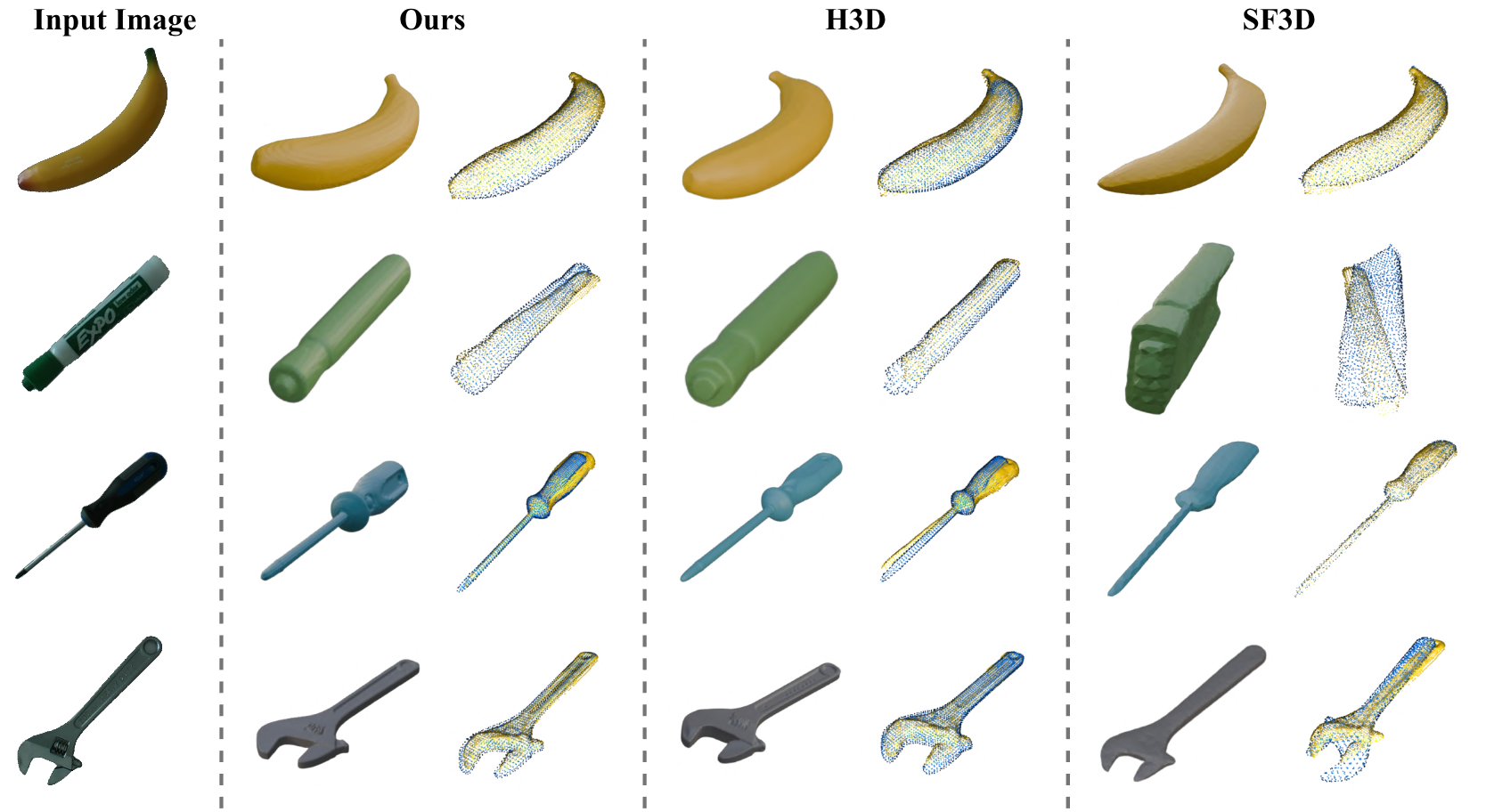

Fig. 2: Qualitative Comparison of Generated Meshes and Registration Results. Our method (left) achieves geometric quality nearly identical to that of the slow H3D baseline (middle), while the fast SF3D baseline (right) produces significant artifacts.